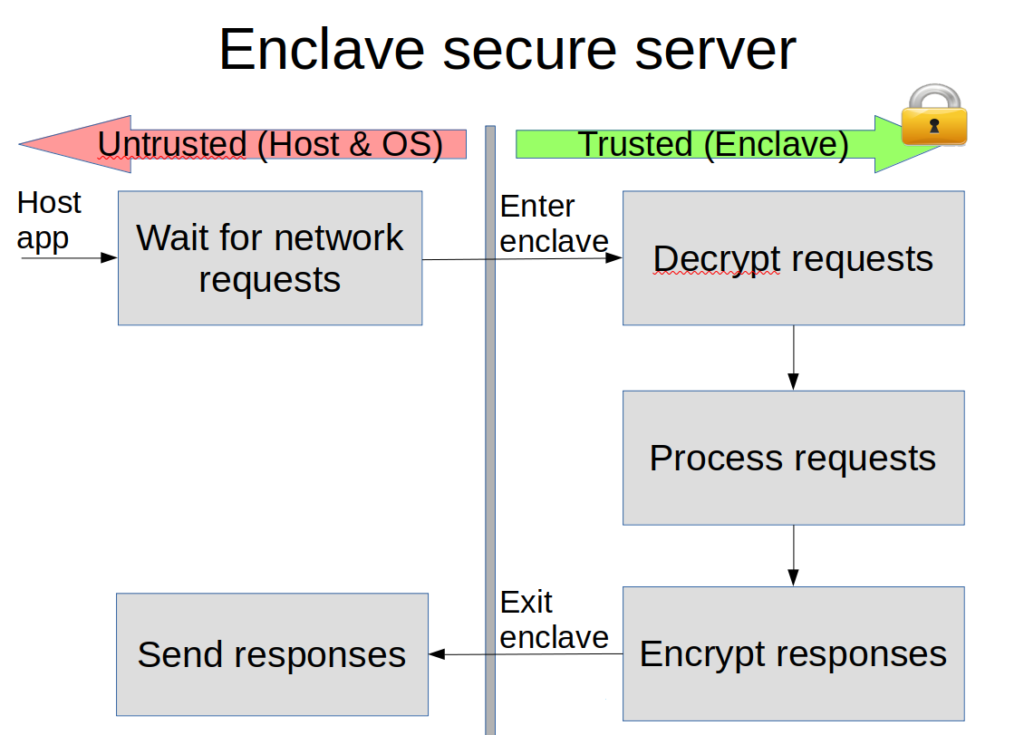

In SGX, an enclave may only run in user mode; therefore, OS services such as system calls are not directly accessible to it. Instead, an application running securely inside an enclave must exit the enclave to perform the system call in untrusted code and then re-enter the enclave with the result. Consider, for example, a secure server executing inside an enclave: to receive network requests, the enclave must transition to the untrusted execution context, and it must transition again to send the responses.

We consider the latency of the enclave exit and enter instructions to be the direct cost of an enclave transition. We find that these transitions are an order of magnitude slower than traditional system calls. Moreover, the direct costs in SGX have risen since our first analysis, because these instructions are now also used to mitigate speculative execution attacks, which adds further latency.

When an enclave exits, its execution state is partially evicted from the caches and other microarchitectural buffers in the CPU. As a result, when enclave execution resumes, this state must be restored. We refer to the associated overhead as indirect costs.

We identify two major contributors to indirect costs: pollution of the last-level cache (LLC) and flushing of the translation lookaside buffer (TLB).

For example, ignoring direct costs and comparing to native execution, receiving large network requests that pollute the LLC can be up to 2x slower because of the additional LLC misses. Reconstructing virtual-to-physical mappings when traversing linked lists after enclave transitions can cause an even larger 6x slowdown because of TLB flushes.

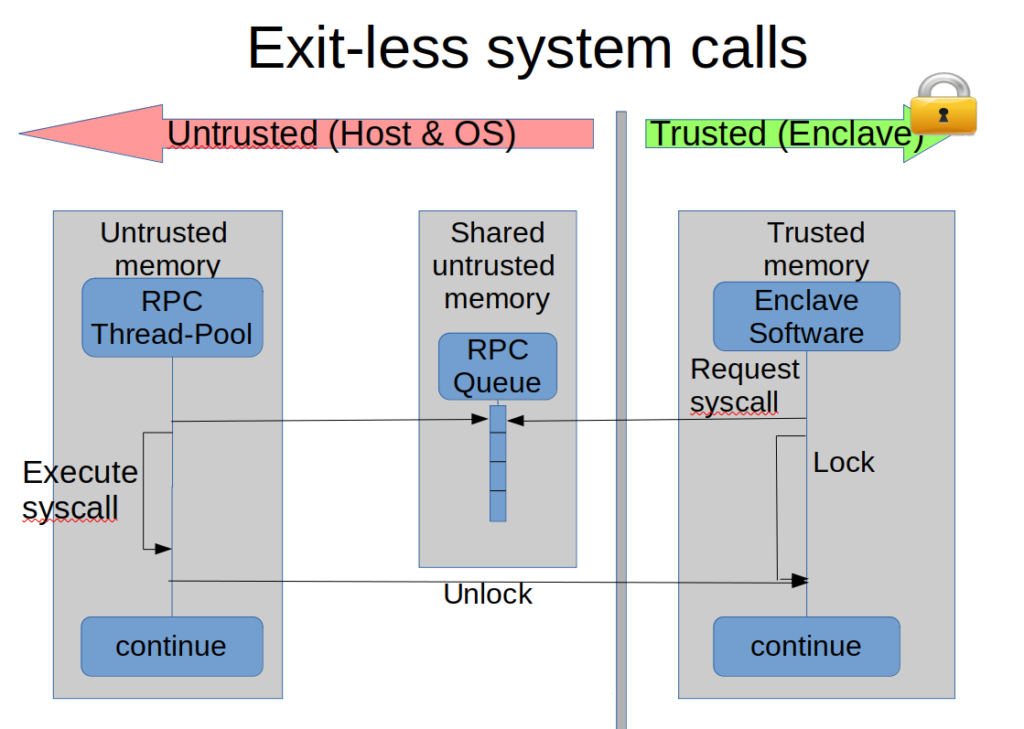

The RPC mechanism enables enclave code to invoke blocking calls into untrusted code without exiting the enclave. The actual call is delegated to a worker thread that executes in the untrusted context of the enclave's owner process. Because an enclave may execute multiple threads, we propose maintaining a pool of worker threads so that requests issued in parallel can also be served in parallel.

The thread pool interacts with the enclave through a shared job queue located in untrusted memory. To perform a call, the enclave enqueues a pointer to the untrusted function together with its parameters and then blocks, by polling, until the call completes. The worker threads poll the queue, invoke the requested functions, and transfer the results back through the shared untrusted buffer. The enclave then reads the result and continues execution, while the thread pool waits for further requests.
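The queue protocol described above can be sketched in C under some simplifying assumptions. The sketch runs as an ordinary process: the "enclave" is simply the thread that calls `rpc_call`, and the statically allocated `job_queue` stands in for untrusted shared memory. The slot state machine and all names (`rpc_call`, `worker_main`, `start_workers`, `stop_workers`, `untrusted_double`) are hypothetical illustrations, not the authors' implementation.

```c
#include <assert.h>
#include <pthread.h>
#include <sched.h>
#include <stdatomic.h>
#include <stdbool.h>

/* Slot life cycle: FREE -> RESERVED -> PENDING -> RUNNING -> DONE -> FREE */
enum { SLOT_FREE, SLOT_RESERVED, SLOT_PENDING, SLOT_RUNNING, SLOT_DONE };

typedef long (*untrusted_fn)(long arg);

typedef struct {
    _Atomic int state;
    untrusted_fn fn; /* pointer to the untrusted function to invoke */
    long arg;        /* its parameter */
    long result;     /* written back by the worker thread */
} rpc_slot;

#define QUEUE_SLOTS 16
#define NUM_WORKERS 2

/* Assumption: in a real deployment this array lives in untrusted memory shared with the enclave. */
static rpc_slot job_queue[QUEUE_SLOTS];
static atomic_bool shutting_down;
static pthread_t workers[NUM_WORKERS];

/* Untrusted worker: poll the queue, run pending jobs, publish the results. */
static void *worker_main(void *unused) {
    (void)unused;
    while (!atomic_load(&shutting_down)) {
        for (int i = 0; i < QUEUE_SLOTS; i++) {
            rpc_slot *s = &job_queue[i];
            int expected = SLOT_PENDING;
            /* Claim the job; the seq_cst CAS also makes fn/arg visible. */
            if (atomic_compare_exchange_strong(&s->state, &expected, SLOT_RUNNING)) {
                s->result = s->fn(s->arg);
                atomic_store_explicit(&s->state, SLOT_DONE, memory_order_release);
            }
        }
        sched_yield(); /* allowed here: workers run outside the enclave */
    }
    return NULL;
}

/* Enclave side: enqueue the call, then block by polling instead of exiting. */
static long rpc_call(untrusted_fn fn, long arg) {
    for (;;) {
        for (int i = 0; i < QUEUE_SLOTS; i++) {
            rpc_slot *s = &job_queue[i];
            int expected = SLOT_FREE;
            if (!atomic_compare_exchange_strong(&s->state, &expected, SLOT_RESERVED))
                continue; /* slot busy, try the next one */
            s->fn = fn;
            s->arg = arg;
            atomic_store_explicit(&s->state, SLOT_PENDING, memory_order_release);
            /* Pure spin: blocking primitives would need a system call, i.e. an exit. */
            while (atomic_load_explicit(&s->state, memory_order_acquire) != SLOT_DONE)
                ;
            long r = s->result;
            atomic_store_explicit(&s->state, SLOT_FREE, memory_order_release);
            return r;
        }
    }
}

static void start_workers(void) {
    atomic_store(&shutting_down, false);
    for (int i = 0; i < NUM_WORKERS; i++) {
        int rc = pthread_create(&workers[i], NULL, worker_main, NULL);
        assert(rc == 0);
        (void)rc;
    }
}

static void stop_workers(void) {
    atomic_store(&shutting_down, true);
    for (int i = 0; i < NUM_WORKERS; i++)
        pthread_join(workers[i], NULL);
}

/* Hypothetical stand-in for an untrusted system call wrapper. */
static long untrusted_double(long x) { return 2 * x; }
```

After `start_workers()`, a call such as `rpc_call(untrusted_double, 21)` returns 42 while the calling thread never leaves its own context. The enclave side spins rather than waiting on a condition variable or futex, since those would themselves require a system call. Because the slots sit in untrusted memory, a real enclave would also need to copy results in and validate them before use.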

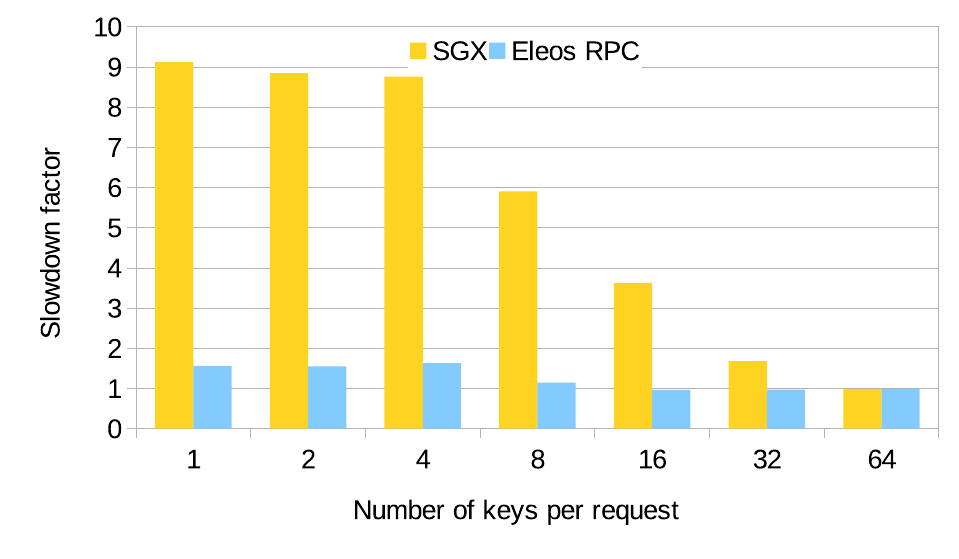

To evaluate the usefulness of the exitless system call mechanism, we ran a simple hashtable workload and measured the slowdown of enclave execution relative to native execution, both with exitless system calls and with system calls that require enclave transitions.

We see that the exitless mechanism almost completely eliminates the system call overhead. Batching requests also hides the slowdown, because the cost of each exit is amortized over multiple requests and therefore has a smaller overall effect.
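The amortization effect of batching can be illustrated with a simple cost model. This is an assumption for illustration, not the measured model: each batch of $B$ requests incurs one exit/re-entry pair of cost $C_{\text{trans}}$, each request takes $T_{\text{req}}$ of native work, and indirect costs are ignored. The slowdown relative to native execution is then

$$
S(B) = \frac{B\,T_{\text{req}} + C_{\text{trans}}}{B\,T_{\text{req}}} = 1 + \frac{C_{\text{trans}}}{B\,T_{\text{req}}}
$$

With hypothetical values $C_{\text{trans}} = 10\,T_{\text{req}}$ and $B = 100$, this gives $S = 1 + 10/100 = 1.1$. The transition term shrinks as $B$ grows, while the exitless mechanism removes $C_{\text{trans}}$ altogether and replaces it with the much smaller cost of polling the shared queue.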

Furthermore, because our exitless system call mechanism does not exit the enclave, it also avoids the indirect cost of TLB flushes.

Finally, we observe that Intel's cache partitioning technology (Cache Allocation Technology, CAT) can further reduce the indirect costs of LLC pollution by assigning the untrusted system software and the enclave to different portions of the LLC.
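As a hedged illustration of such a partition, the C sketch below assumes a Linux host that exposes CAT through the resctrl filesystem. On Intel, a capacity bitmask (CBM) must consist of contiguous set bits, so the helper splits the LLC ways into two disjoint contiguous masks and formats a `schemata` line for each. The way counts, the split, and the helper names (`split_llc`, `schemata_line`) are hypothetical. Writing the lines to the `schemata` files of two groups under `/sys/fs/resctrl`, with the enclave threads' IDs in one group's `tasks` file and the worker threads in the other's, is left out because it requires root.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Mask of `n` contiguous set bits starting at bit `lo` (Intel CAT requires contiguity). */
static unsigned long contiguous_mask(unsigned lo, unsigned n) {
    return ((1UL << n) - 1UL) << lo;
}

/* Split an LLC with `ways` ways: the low `enclave_ways` go to the enclave,
 * the remaining ways to untrusted code; the two masks never overlap. */
static void split_llc(unsigned ways, unsigned enclave_ways,
                      unsigned long *enclave_cbm, unsigned long *untrusted_cbm) {
    assert(ways > 1 && ways < 64);
    assert(enclave_ways > 0 && enclave_ways < ways);
    *enclave_cbm = contiguous_mask(0, enclave_ways);
    *untrusted_cbm = contiguous_mask(enclave_ways, ways - enclave_ways);
}

/* Format a resctrl schemata line ("L3:<cache_id>=<hex cbm>") for one L3 domain. */
static int schemata_line(char *buf, size_t len, unsigned cache_id, unsigned long cbm) {
    return snprintf(buf, len, "L3:%u=%lx\n", cache_id, cbm);
}
```

For a hypothetical 12-way LLC with 4 ways reserved for the enclave, `split_llc(12, 4, &e, &u)` yields `e = 0xf` and `u = 0xff0`, and `schemata_line` formats the untrusted group's mask as `L3:0=ff0`.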